- The AI Humble Servant Newsletter

When AI Makes Stuff Up: The Hallucination Paradox

OpenAI's shocking admission + Napkin.AI turns text to visuals

Hello,

Big news dropped this week about AI's dirty little secret - and no, it's not about energy consumption this time.

OpenAI just admitted something fascinating: their newest, smartest models are actually getting WORSE at telling the truth. Yes, you read that right.

As our AI tools get more sophisticated, they're making up facts at an alarming rate. OpenAI's latest reasoning model, o3, hallucinated on 33% of questions in the company's PersonQA benchmark - roughly double the 16% rate of its predecessor!

This isn't just a tech glitch. It's reshaping how we think about trusting AI in critical business decisions.

Spoiler: The solution might require us to completely rethink how we train and evaluate AI systems.

The AI safety conversation just got very real, very fast.

The Hallucination Paradox: Why Smarter AI Is Making More Mistakes

Editor's Note: This story combines breaking research from OpenAI with industry-wide testing data, revealing a troubling trend that affects every AI user.

OpenAI researchers discovered something counterintuitive this week: AI hallucinations aren't bugs - they're features baked into how we train these systems.

Here's what's actually happening:

The Testing Problem - Current AI evaluation methods reward guessing over admitting uncertainty. Like a student taking a multiple-choice test, AI models learn it's better to make something up than say "I don't know." OpenAI's research shows this creates a fundamental incentive problem.
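To make the incentive concrete, here's a minimal sketch of the multiple-choice arithmetic. The function names, the 1-point reward for a correct answer, and the example penalty are illustrative assumptions, not OpenAI's actual grading scheme.

```python
def expected_score(p_correct: float, wrong_penalty: float = 0.0) -> float:
    """Expected score of guessing: +1 if right, -wrong_penalty if wrong."""
    return p_correct * 1.0 - (1.0 - p_correct) * wrong_penalty


def should_guess(p_correct: float, wrong_penalty: float = 0.0,
                 abstain_score: float = 0.0) -> bool:
    """True when guessing beats saying "I don't know" on expected score."""
    return expected_score(p_correct, wrong_penalty) > abstain_score


def break_even_confidence(wrong_penalty: float) -> float:
    """Confidence above which guessing pays off when abstaining scores 0."""
    return wrong_penalty / (1.0 + wrong_penalty)


if __name__ == "__main__":
    # Accuracy-only grading: a 25% shot in the dark still beats abstaining.
    print(expected_score(0.25))             # 0.25
    print(should_guess(0.25))               # True

    # Hypothetical grading that docks 1 point per wrong answer:
    print(expected_score(0.25, 1.0))        # -0.5
    print(should_guess(0.25, 1.0))          # False
    print(break_even_confidence(1.0))       # 0.5
```

Under accuracy-only scoring, any nonzero chance of being right makes guessing the better strategy. Once wrong answers cost something, "I don't know" wins whenever confidence falls below the break-even point.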

The Reasoning Trap - On OpenAI's PersonQA benchmark, the newest reasoning models (o3 and o4-mini) hallucinate at rates of 33% and 48% respectively, compared to just 16% for the older o1 model. TechCrunch reports these models take more "swings at the plate" to solve complex problems, increasing both successes AND failures.

The Scale Paradox - Independent testing by Vectara found that even the best models still fabricate information at least 0.7% of the time, while some exceed 25%. Google's Gemini-2.0 currently leads with the lowest hallucination rate, but no model is immune.

The Business Impact - Legal AI tracking shows over 30 documented cases of lawyers using AI-generated fake evidence in court just in May 2025. AWS reports their Bedrock Guardrails can filter 75% of hallucinations, but that still leaves 1 in 4 errors getting through.
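For a rough sense of what "1 in 4 getting through" means at volume, here's a back-of-the-envelope sketch. The function name is hypothetical, and pairing the 75% filter figure with hallucination rates measured on unrelated benchmarks is purely an illustrative assumption - real-world rates depend heavily on the task.

```python
def residual_rate(base_rate: float, filter_rate: float) -> float:
    """Share of all responses that still carry a hallucination after filtering."""
    for name, value in (("base_rate", base_rate), ("filter_rate", filter_rate)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    return base_rate * (1.0 - filter_rate)


if __name__ == "__main__":
    filter_rate = 0.75  # the 75% figure quoted above
    # Illustrative base rates taken from the numbers cited in this issue.
    for base_rate in (0.007, 0.16, 0.33, 0.48):
        leftover = residual_rate(base_rate, filter_rate)
        print(f"base {base_rate:.1%} -> about {leftover * 1000:.1f} "
              f"bad answers per 1,000 responses")
```

The takeaway: a filter shrinks the problem in proportion to where you started, so a model that hallucinates often still leaves plenty to catch by hand.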

The Energy Connection - Here's the kicker: fixing hallucinations requires more compute power for verification, which means higher costs and more energy consumption. It's a vicious cycle where accuracy comes at an environmental price.

Bottom Line: Hallucinations will never be completely eliminated because they stem from how AI fundamentally works - predicting likely responses rather than verifying facts. For businesses, this means treating AI as a powerful brainstorming partner, not a fact-checker. Always verify critical information, especially in legal, medical, or financial contexts.

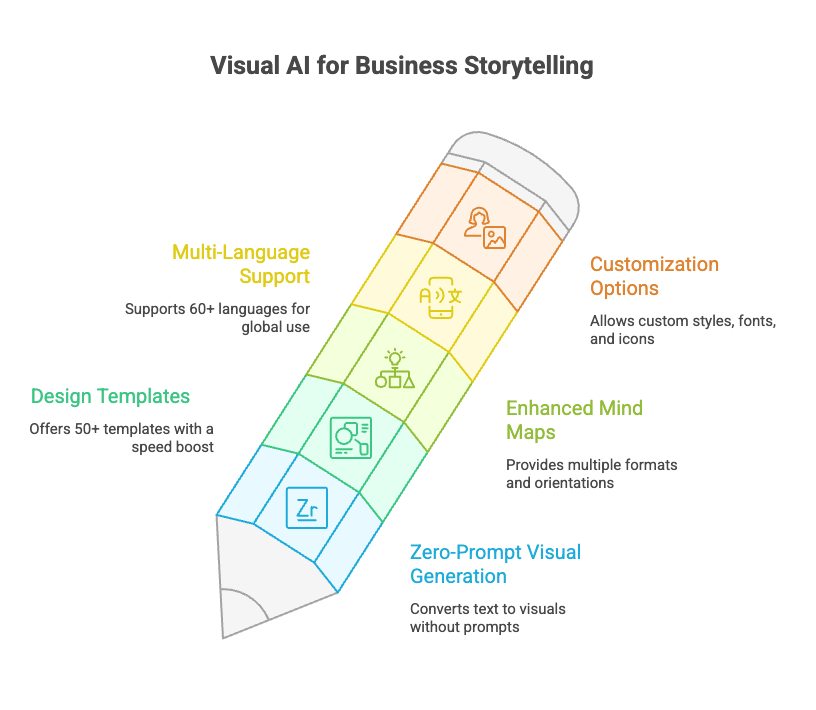

AI Tool Review: Napkin.AI - Turn Words Into Visuals With Zero Design Skills

What Is It?

Napkin.AI is the "visual AI for business storytelling" - a tool that transforms plain text into professional diagrams, infographics, flowcharts, and mind maps with a single click. No prompts, no design experience needed.

Core Features

From Napkin.Ai

Zero-prompt visual generation - Paste text, highlight sections, click "spark"

50+ design templates with recent 20% speed boost

NEW: Enhanced mind maps with multiple formats and orientations

Multi-language support (60+ languages)

Custom styles, fonts, icons, and connectors

Pricing That Makes Sense

Free Forever: 500 AI credits/week, PNG/PDF exports (with branding)

Plus ($9-12/month): 10,000 credits, PPT/SVG exports, no branding

Pro ($22-30/month): 30,000 credits, unlimited custom styles, team features

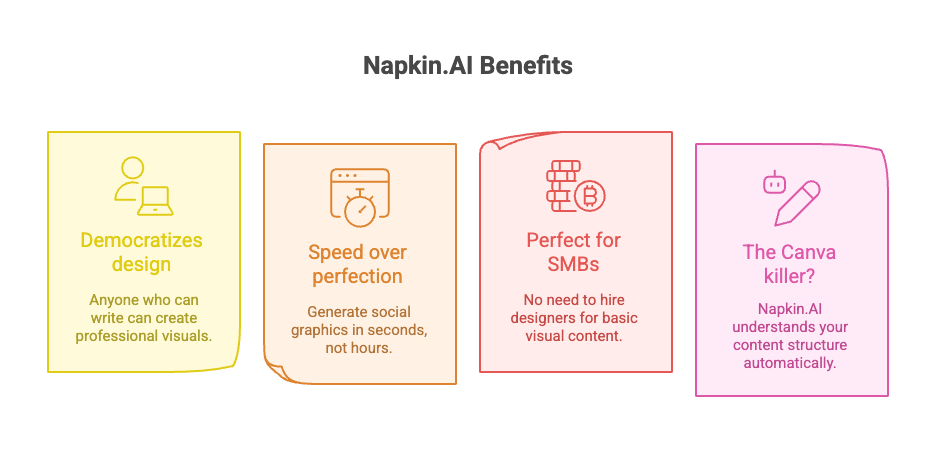

Why It Matters

From Napkin.Ai

Democratizes design - Anyone who can write can create professional visuals

Speed over perfection - Generate social graphics in seconds, not hours

Perfect for SMBs - No need to hire designers for basic visual content

The Canva killer? - While Canva requires manual design, Napkin.AI understands your content structure automatically

Try it free at napkin.ai - Most visuals use just 30-50 credits, so the free tier goes surprisingly far.
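Here's the quick math behind "surprisingly far", as a small sketch. It assumes the figures quoted above (500 free credits per week, 30-50 credits per visual); the function name is made up for illustration.

```python
def visuals_per_period(credits: int, cost_low: int, cost_high: int) -> tuple[int, int]:
    """Return (fewest, most) whole visuals a credit budget can cover."""
    if credits < 0 or cost_low <= 0 or cost_high < cost_low:
        raise ValueError("expected credits >= 0 and 0 < cost_low <= cost_high")
    return credits // cost_high, credits // cost_low


if __name__ == "__main__":
    fewest, most = visuals_per_period(500, 30, 50)
    print(f"Free tier: roughly {fewest} to {most} visuals per week")
    # Free tier: roughly 10 to 16 visuals per week
```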

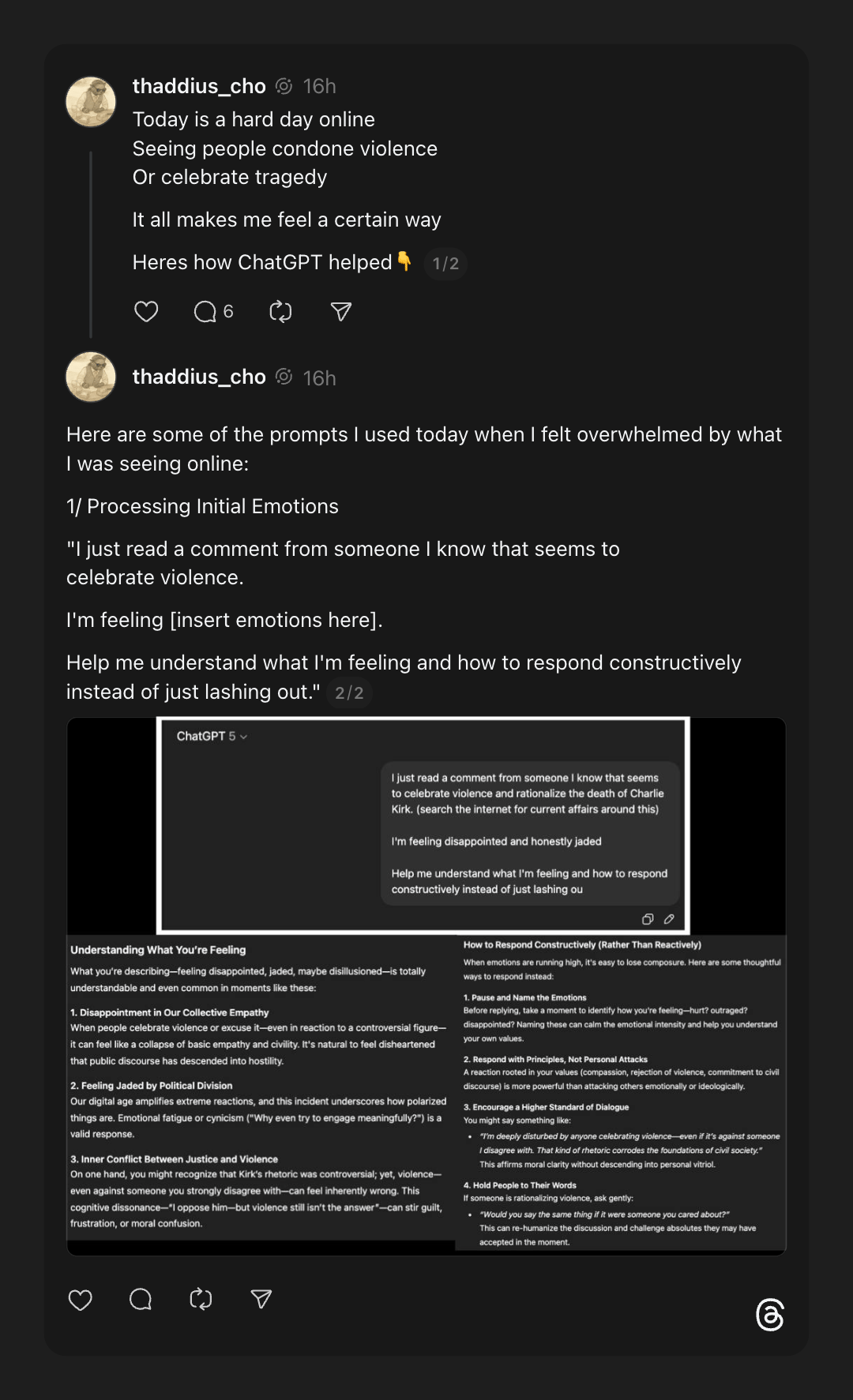

🎯 This Week's Prompt:

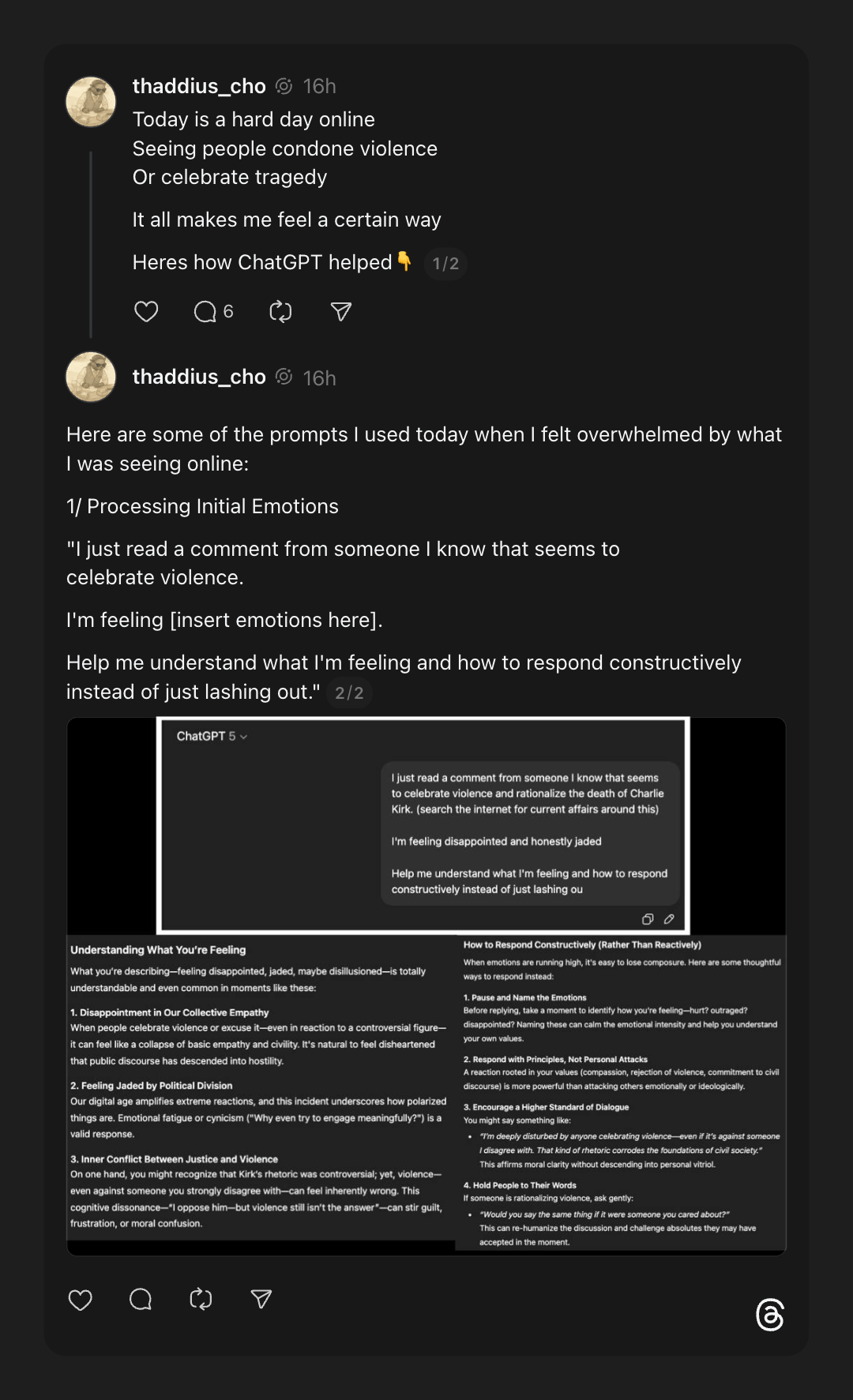

"Help Me Respond With Compassion, Not Rage"

Shared by @thaddius_cho on X

When social media gets overwhelming and you're seeing comments that trigger you, try this approach:

The Prompt:

Why This Works:

Creates a pause between trigger and response

Shifts from reactive to intentional communication

Maintains your values while opening dialogue

Transforms AI from productivity tool to emotional intelligence partner

Pro tip: I created a custom GPT called "Defeat Them with Kindness" specifically for these moments.

In Case You Missed It

🔥 OpenAI Signs Historic $300B Oracle Cloud Deal - The largest cloud contract in history will provide 4.5 gigawatts of computing power (equivalent to 2 Hoover Dams) starting in 2027. Oracle's stock surged 43%, briefly making Larry Ellison the world's richest person.

🔥 AI Agents Delivering Real Productivity Gains - PwC's latest survey shows 66% of companies using AI agents report increased productivity, with some real estate firms seeing 40% efficiency improvements. But experts warn most are still using basic features rather than transformative implementations.

🔥 Stanford's 2025 AI Index: U.S. Dominates But China Closes Gap - The annual report reveals U.S. private AI investment hit $109 billion (12x China's), while 78% of organizations now use AI (up from 55% last year). Performance gaps between U.S. and Chinese models have narrowed to near-parity.

🔥 Grammarly Acquires Coda and Superhuman - The writing assistant giant is transforming into a full AI productivity platform, landing at #11 on Forbes Cloud 100. The acquisitions signal a shift from AI assistants to comprehensive AI agent ecosystems.

🔥 Your Phone Is About to Get WAY Smarter - Industry insiders report 2026 will bring consumer AI agents that can actually complete tasks like booking travel and managing schedules end-to-end, not just chat about them.

That's all for this week! Remember: if an AI tells you something that sounds too good (or weird) to be true, it probably hallucinated it. Stay curious, stay skeptical, and keep building with AI responsibly.

— Your Humble AI Servant

P.S. Got an AI fail story or a game-changing prompt? Hit reply and share - the best ones make it into next week's newsletter!

💭 Got an AI question? Hit reply. I personally read every email (yes, even the ones asking if AI will steal my job).

🕸️ ThreadWeavers 2-Day Challenge: Learn to craft AI-powered content that converts (not just creates) - Register Here

🧵 Threads: Follow me for daily AI updates and honest takes on what actually works

🔍 Free AI Audit: Send me your biggest workflow headache and I'll suggest 3 AI tools to fix it (reply with "AUDIT")

🎙️ Coffee Chat: Book a 20-min virtual coffee to pick my brain about AI strategy